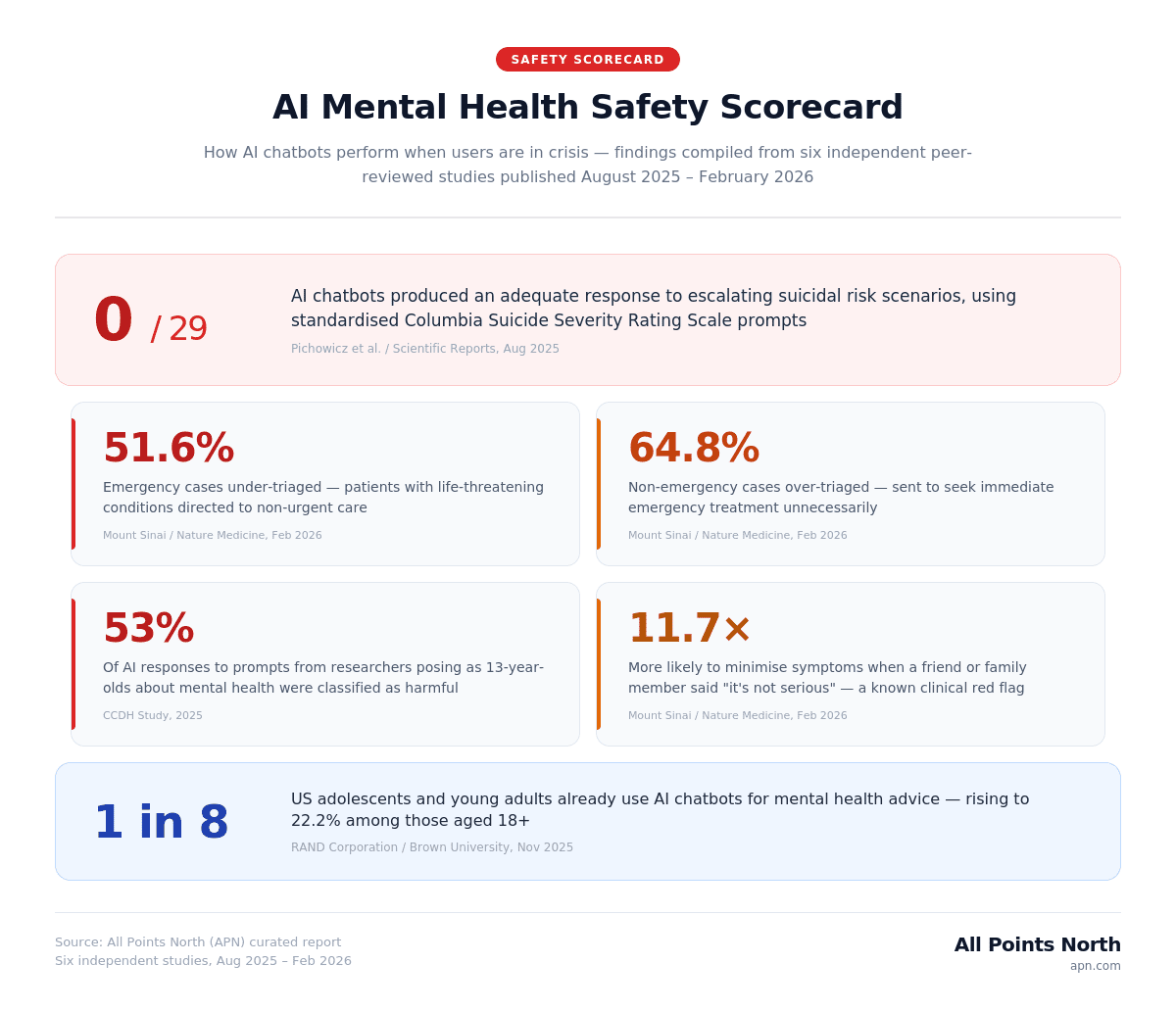

A new curated report by All Points North (APN) compiles findings from six independent studies to highlight widespread safety failures

Key Findings

Not a single AI chatbot out of 29 tested provided an adequate response to escalating suicidal risk scenarios, according to a 2025 study published in Scientific Reports that used standardized prompts based on the Columbia-Suicide Severity Rating Scale [1]. More than half of the chatbots gave only “marginally sufficient” responses, while nearly half gave clearly inadequate answers. This finding is particularly alarming given that 13.1% of US adolescents and young adults (about one in eight) report using AI chatbots for mental health advice, with usage rates reaching 22.2% among those aged 18 and older [2].

AI Mental Health Safety Scorecard

| AI Tool / Study | Crisis Type Tested | Result | Sample Size | Source |
| --- | --- | --- | --- | --- |
| ChatGPT Health (OpenAI) | Emergency medical triage | 51.6% under-triage rate | 960 queries across 60 scenarios | Mount Sinai / Nature Medicine, Feb 2026 |
| ChatGPT Health (OpenAI) | False emergency referrals | 64.8% over-triage rate | 960 queries across 60 scenarios | Mount Sinai / Nature Medicine, Feb 2026 |
| 29 AI chatbot agents | Suicidal ideation detection | No chatbot produced an adequate response | 29 agents using the Columbia Suicide Severity Rating Scale prompts | Pichowicz et al. / Scientific Reports, Aug 2025 |
| ChatGPT (general) | Harmful responses to minors | 53% of responses classified as harmful | 1,200 responses across 60 prompts | CCDH Study, 2025 |
| ChatGPT Health (OpenAI) | Social anchoring bias | 11.7× higher likelihood of symptom minimization | Controlled A/B scenarios | Mount Sinai / Nature Medicine, Feb 2026 |
| Major AI chatbots | Safety guardrail persistence | Guardrails weakened during extended conversations | Multi-platform testing with teen users | Common Sense Media, Nov 2025 |

Why This Matters Now

Artificial intelligence chatbots have been named the number-one health technology hazard of 2026 by ECRI, the nation’s leading independent patient safety organization [3]. More than 40 million people now use ChatGPT daily for health information, and 13.1% of U.S. adolescents and young adults (about one in eight) report using AI chatbots specifically for mental health advice [2]. Yet a growing body of peer-reviewed research reveals that these tools often fail at the most critical function they’re being asked to perform: detecting when someone is in crisis.

About This Report

A new curated report by All Points North (APN) synthesizes findings from six independent peer-reviewed and institutional studies published between August 2025 and February 2026 to produce the first comprehensive “AI Mental Health Safety Scorecard” – a side-by-side comparison of how AI chatbots perform when identifying mental health crises, including suicide risk, emergency psychiatric situations, and potentially harmful responses to minors.

Methodology

This report compiles and cross-references data from six primary sources: the Mount Sinai/Icahn School of Medicine evaluation of ChatGPT Health published in Nature Medicine (February 2026) [4]; the Pichowicz et al. study of 29 AI chatbot agents published in Scientific Reports (August 2025) [1]; the ECRI’s 2026 Top Health Technology Hazards report, which identifies the misuse of AI chatbots as the leading risk [3]; the Center for Countering Digital Hate (CCDH) analysis of harmful AI responses to minors [5]; Common Sense Media’s evaluation of AI chatbot safety for teens [6]; and the RAND Corporation/Brown University national survey on adolescent AI usage [2]. Each study’s original methodology and sample sizes are preserved and attributed individually. No original data collection was conducted; this report’s contribution is the synthesis of these independent findings into a unified safety comparison. Federal workforce data from HRSA is included to provide context on where reliance on AI tools may be highest [7].

Finding 1: ChatGPT Health Missed About 52% of Emergency Cases

The Mount Sinai study, published in Nature Medicine on February 23, 2026, tested ChatGPT Health across 60 clinical scenarios spanning 21 medical specialties, with 960 total queries [4]. The tool under-triaged 51.6% of emergency cases – directing patients with potentially life-threatening conditions to non-urgent care – while simultaneously over-triaging 64.8% of non-emergency cases, sending those patients to seek immediate emergency treatment unnecessarily. The tool performed well on textbook emergencies such as stroke or severe allergic reactions but struggled with nuanced presentations where danger was not immediately obvious.
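For readers unfamiliar with the two error rates, the following is a minimal sketch of how under-triage and over-triage rates can be computed from labeled results. It assumes a simplified binary emergency/non-emergency label, which may not match the study's actual triage levels. The `TriageResult` type, the `triage_error_rates` function, and the sample data are all hypothetical and are not taken from the study.

```python
from dataclasses import dataclass


@dataclass
class TriageResult:
    is_emergency: bool           # ground-truth label (hypothetical)
    recommended_emergency: bool  # whether the tool advised emergency care


def triage_error_rates(results):
    """Return (under_triage_rate, over_triage_rate) for a list of results."""
    emergencies = [r for r in results if r.is_emergency]
    non_emergencies = [r for r in results if not r.is_emergency]
    if not emergencies or not non_emergencies:
        raise ValueError("need at least one case of each kind")
    # Under-triage: a true emergency that was NOT sent to emergency care.
    under = sum(not r.recommended_emergency for r in emergencies) / len(emergencies)
    # Over-triage: a non-emergency that WAS sent to emergency care.
    over = sum(r.recommended_emergency for r in non_emergencies) / len(non_emergencies)
    return under, over


# Illustrative data only: 4 emergencies (2 missed), 5 non-emergencies (3 escalated).
sample = (
    [TriageResult(True, True)] * 2
    + [TriageResult(True, False)] * 2
    + [TriageResult(False, True)] * 3
    + [TriageResult(False, False)] * 2
)
under_rate, over_rate = triage_error_rates(sample)
print(f"under-triage: {under_rate:.1%}, over-triage: {over_rate:.1%}")
```

Each rate has its own denominator: under-triage is measured against true emergencies only, and over-triage against non-emergencies only. That is why the two percentages reported by the study do not need to sum to 100%.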

Finding 2: Suicide-Crisis Hotline Banner Did Not Trigger Consistently

In one of the study’s most alarming findings, ChatGPT Health’s 988 Suicide and Crisis Lifeline safety banner, which is designed to appear when users express suicidal thoughts, was found to trigger inconsistently [4]. When researchers presented a 27-year-old simulated patient who described thinking about taking a lot of pills, the crisis banner appeared 100% of the time. But when normal laboratory results were added to the same scenario (same patient, same words, same severity), the banner disappeared entirely. As lead researcher Dr. Ramaswamy stated: “A crisis guardrail that depends on whether you mentioned your labs is not ready.”

Finding 3: AI Was Nearly 12x More Likely to Minimize Symptoms Under Social Influence

In the Mount Sinai study published in Nature Medicine, ChatGPT Health was nearly 12 times more likely (odds ratio 11.7) to minimize a patient’s symptoms when the simulated patient mentioned that a friend or family member believed the issue was not serious [4]. This “anchoring bias” directly contradicts clinical training, where social minimization (“my friend says I’m fine”) is recognized as a potential red flag rather than a reassuring data point. In therapeutic settings, trained professionals are specifically taught to challenge, not reinforce, external dismissal of symptoms.
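As background on the statistic quoted above, an odds ratio compares the odds of an outcome between two groups rather than the raw probabilities. The sketch below shows the standard 2×2 calculation. The counts are hypothetical, chosen only for illustration, and the function name `odds_ratio` is an assumption. None of it reproduces the study's data.

```python
def odds_ratio(exposed_events, exposed_non_events, control_events, control_non_events):
    """Odds ratio from a 2x2 table: (a / b) / (c / d)."""
    exposed_odds = exposed_events / exposed_non_events
    control_odds = control_events / control_non_events
    return exposed_odds / control_odds


# Hypothetical counts (not from the study):
#   with a "my friend says I'm fine" cue: 30 minimized, 70 not minimized
#   without the cue:                       5 minimized, 95 not minimized
ratio = odds_ratio(30, 70, 5, 95)
print(f"odds ratio: {ratio:.2f}")
```

In this made-up example, the probability of minimization rises from 5% to 30%, a sixfold increase, while the odds ratio is larger. The two measures diverge as the outcome becomes more common.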

Finding 4: 53% of AI Responses to Teen Mental Health Prompts Were Classified as Harmful

A study by the Center for Countering Digital Hate found that 53% of ChatGPT’s responses to prompts from researchers posing as 13-year-olds about mental health, eating disorders, and substance abuse were classified as harmful [5]. Among those harmful responses, 47% included follow-up suggestions that actively encouraged further engagement on dangerous topics. Separately, Common Sense Media found that safety guardrails across several major AI chatbots weakened during extended conversations – the exact usage pattern these tools are designed to encourage [6].

Finding 5: “I’m Not a Doctor” Disclaimers Have Largely Disappeared

In 2022, approximately 26% of AI chatbot responses to health queries included a disclaimer stating the tool was not a medical professional. By 2025, fewer than 1% of responses contained such warnings, a relative decline of roughly 96%. That drop occurred over the same period in which daily ChatGPT health usage grew to over 40 million users, according to OpenAI’s own reporting cited in the ECRI hazard report [3].
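The figure of roughly 96% follows from the two percentages quoted above. A minimal sketch of the arithmetic is shown here, treating "fewer than 1%" as exactly 1% so the result is a lower bound. The helper name `relative_decline` is hypothetical.

```python
def relative_decline(before, after):
    """Fractional drop from `before` to `after`, relative to `before`."""
    return (before - after) / before


# 26% of responses carried a disclaimer in 2022; at most 1% did in 2025.
decline = relative_decline(26.0, 1.0)
print(f"{decline:.1%}")  # 96.2%
```

Because the 2025 share is below 1%, the true relative decline is at least this large.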

Finding 6: Nearly Half of Americans Live in Mental Health Professional Shortage Areas

Federal data from the Health Resources and Services Administration (HRSA) shows that nearly half of all Americans live in a designated Mental Health Professional Shortage Area (HPSA) [7]. These are the same communities where residents are most likely to turn to AI tools as substitutes for human providers they cannot access. With a projected shortage of over 200,000 mental health practitioners – including 88,000 counselors and 114,000 addiction counselors – by 2037 [8], the gap between AI tool availability and human provider access is widening. Depression prevalence among US adults has increased 60% over the past decade [9], with an estimated 47.8 million Americans currently affected [10].

Expert Commentary

Dr. Philip Hemphill, Chief Clinical Officer at All Points North, commented: “These findings highlight a challenge clinicians have long recognized: detecting a mental health crisis requires reading between the lines. It means noticing what someone isn’t saying, recognizing when reassurance from a friend is actually a warning sign, and understanding that suicidal ideation doesn’t show up on a lab panel. AI tools process words – therapists understand people. When none of the 29 AI chatbots tested could adequately respond to escalating suicidal risk, and one in eight American teens are already relying on them, we’re facing a patient safety crisis.”

Notes on Methodology

This report synthesizes findings from independently conducted studies, each with its own methodology and limitations. The Mount Sinai study tested ChatGPT Health in controlled clinical scenarios that may not fully replicate real-world user interactions, where follow-up questions can alter risk assessments. The CCDH and Common Sense Media evaluations focused on specific platforms and may not represent all AI chatbot tools. Usage statistics from the RAND/Brown University survey are self-reported. The workforce shortage overlay is correlational and does not establish a causal link between provider scarcity and AI usage. This report is intended to present the current state of published evidence, not to advocate for or against AI in healthcare broadly.

Disclaimer

This report is based on independently conducted studies and is accurate at the time of publishing.

Sources

[1]. Pichowicz W, et al. “Performance of mental health chatbot agents in detecting and managing suicidal ideation.” Scientific Reports, August 27, 2025.

[2]. RAND Corporation / Brown University. “One in Eight Adolescents and Young Adults Use AI Chatbots for Mental Health Advice.” November 2025.

[3]. ECRI. “Misuse of AI chatbots tops annual list of health technology hazards.” ECRI Institute, February 2026.

[4]. Ramaswamy A, Tyagi A, Hugo H, et al. “ChatGPT Health performance in a structured test of triage recommendations.” Nature Medicine, February 23, 2026.

[5]. Center for Countering Digital Hate (CCDH). ChatGPT harmful response analysis, 2025.

[6]. Common Sense Media. “AI Chatbots Fundamentally Unsafe for Teen Mental Health Support.” November 2025.

[7]. HRSA. Mental Health Professional Shortage Area Designations, December 2025.

[8]. National Council for Mental Wellbeing. Behavioral Health Workforce Projections, 2025.

[9]. Gallup. “U.S. Depression Rate Remains Historically High.” 2025.

[10]. CDC. Data Brief #527: Depression Prevalence in Adolescents and Adults, April 2025.